Production-scale inference profiler to optimize your AI stack across models, engines, GPUs, and other accelerators.

NVIDIA

PyTorch

vLLM

SGLang

TensorRT

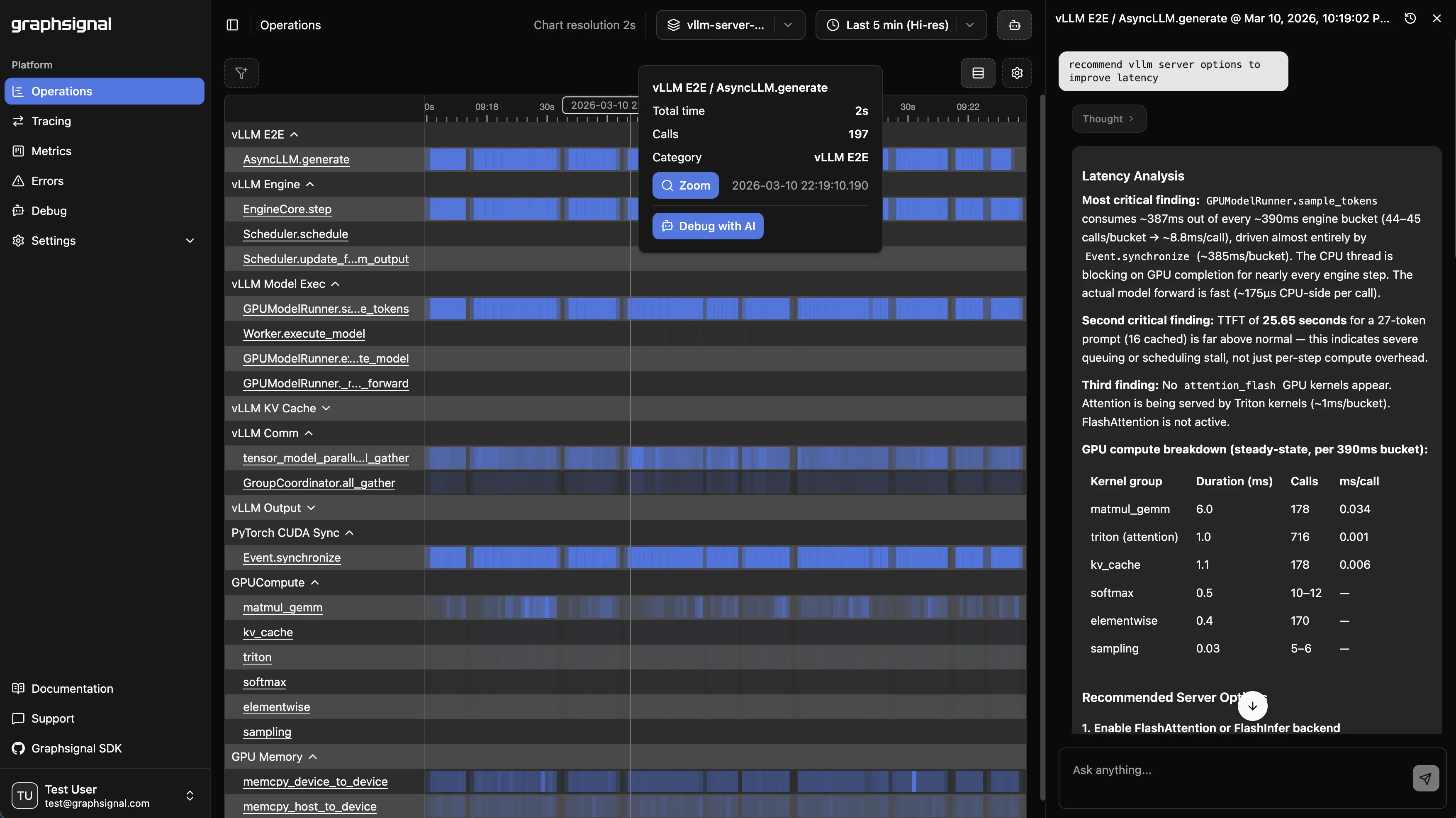

Inference profiling

Continuous, high-resolution profiling timelines exposing operation durations and resource utilization across inference workloads.

LLM tracing

LLM generation tracing with per-step timing, token throughput, and latency breakdowns for major inference frameworks.
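As a rough illustration of what a per-step latency breakdown measures, the sketch below timestamps each token from a streaming generator and derives time to first token, mean inter-token latency, and token throughput. It is a generic example and not this product's API: the function name `trace_generation`, its `clock` parameter, and the returned field names are all hypothetical.

```python
import time
from typing import Callable, Dict, Iterable


def trace_generation(
    token_stream: Iterable[object],
    clock: Callable[[], float] = time.perf_counter,
) -> Dict[str, float]:
    """Hypothetical helper: consume a token stream and summarize its timing."""
    start = clock()
    stamps = []
    for _ in token_stream:
        # One timestamp per generated token (one decoding step).
        stamps.append(clock())

    if not stamps:
        return {
            "tokens": 0,
            "time_to_first_token_s": 0.0,
            "mean_inter_token_s": 0.0,
            "total_s": 0.0,
            "tokens_per_s": 0.0,
        }

    total = stamps[-1] - start
    # Gaps between consecutive tokens, excluding the wait for the first one.
    gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
    return {
        "tokens": len(stamps),
        "time_to_first_token_s": stamps[0] - start,
        "mean_inter_token_s": sum(gaps) / len(gaps) if gaps else 0.0,
        "total_s": total,
        "tokens_per_s": len(stamps) / total if total > 0 else 0.0,
    }
```

Separating time to first token from inter-token latency is useful because the first is typically dominated by prompt processing and the second by decoding, so they tend to point to different bottlenecks.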

System metrics

System-level metrics for inference engines and hardware (CPUs, GPUs, and other accelerators).

Error monitoring

Error monitoring for device-level failures, runtime exceptions, and inference errors.

AI optimization

Inference telemetry for AI agents to identify bottlenecks and drive targeted improvements across the inference stack.